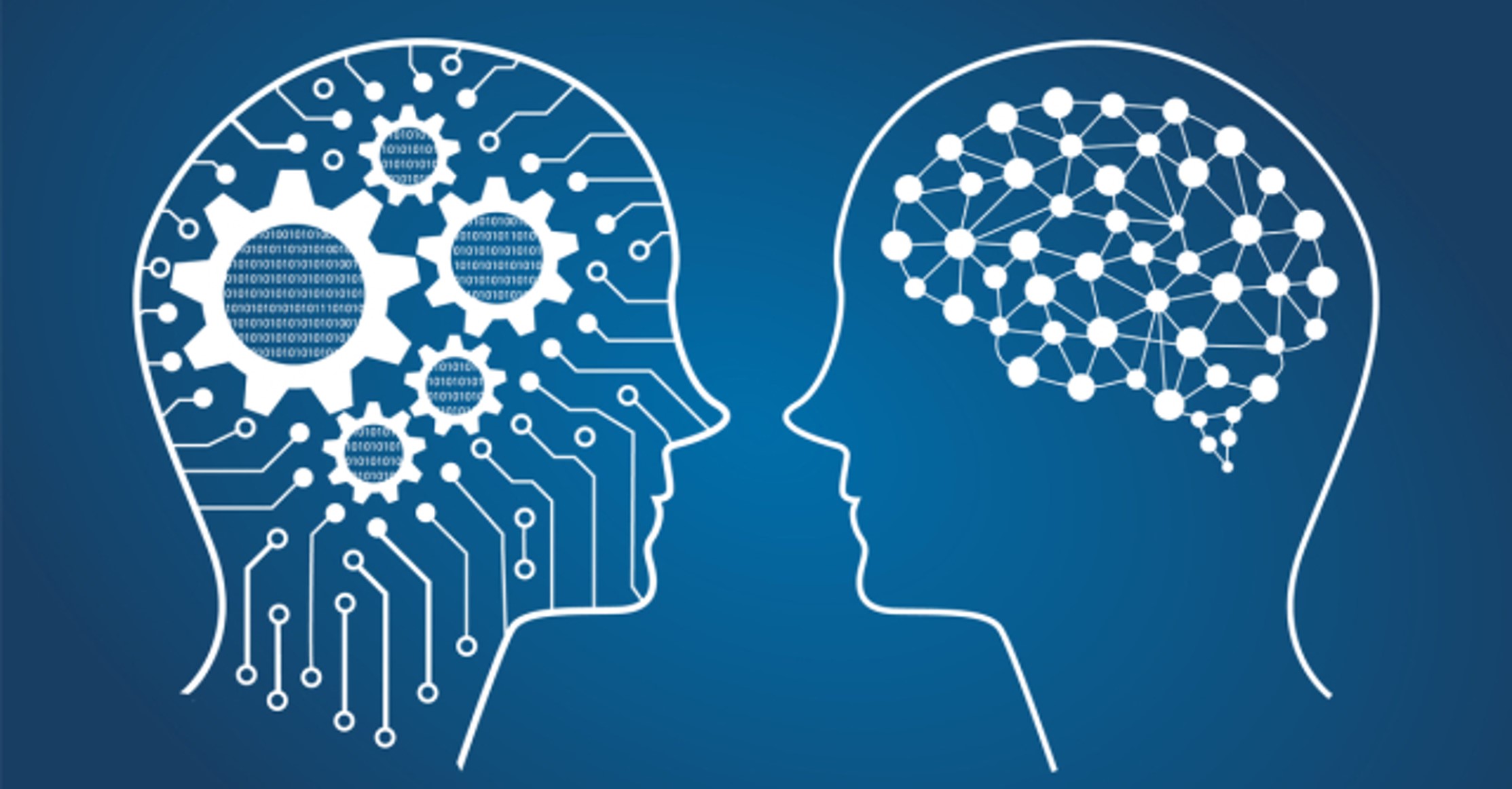

We might stop here, and call this point the important divide between the machines we have and the machines we will build in the future. However, it is better to be more specific about the types of representations machines need to form, and what those representations need to be about.

Machines in the next, more advanced class form representations not only about the world, but also about other agents or entities in it. In psychology, this is called "theory of mind" – the understanding that people, creatures and objects in the world can have thoughts and emotions that affect their own behavior.

This understanding is crucial to how we humans formed societies, because it allowed us to have social interactions. Without understanding each other's motives and intentions, and without taking into account what somebody else knows about us or about the environment, working together is at best difficult, at worst impossible.

If AI systems are indeed ever to walk among us, they'll have to be able to understand that each of us has thoughts, feelings and expectations for how we'll be treated. And they'll have to adjust their behavior accordingly.